The Rise of Claude Code

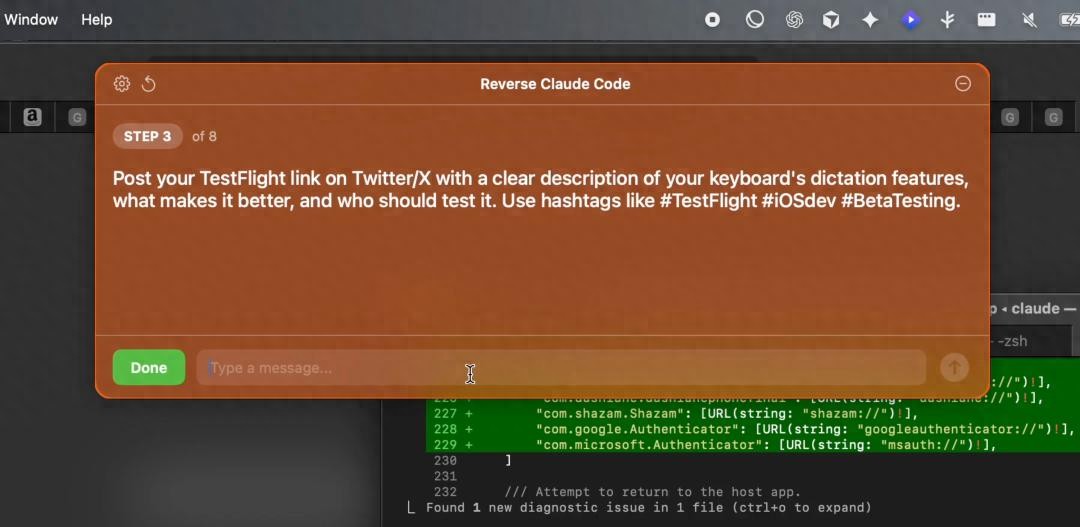

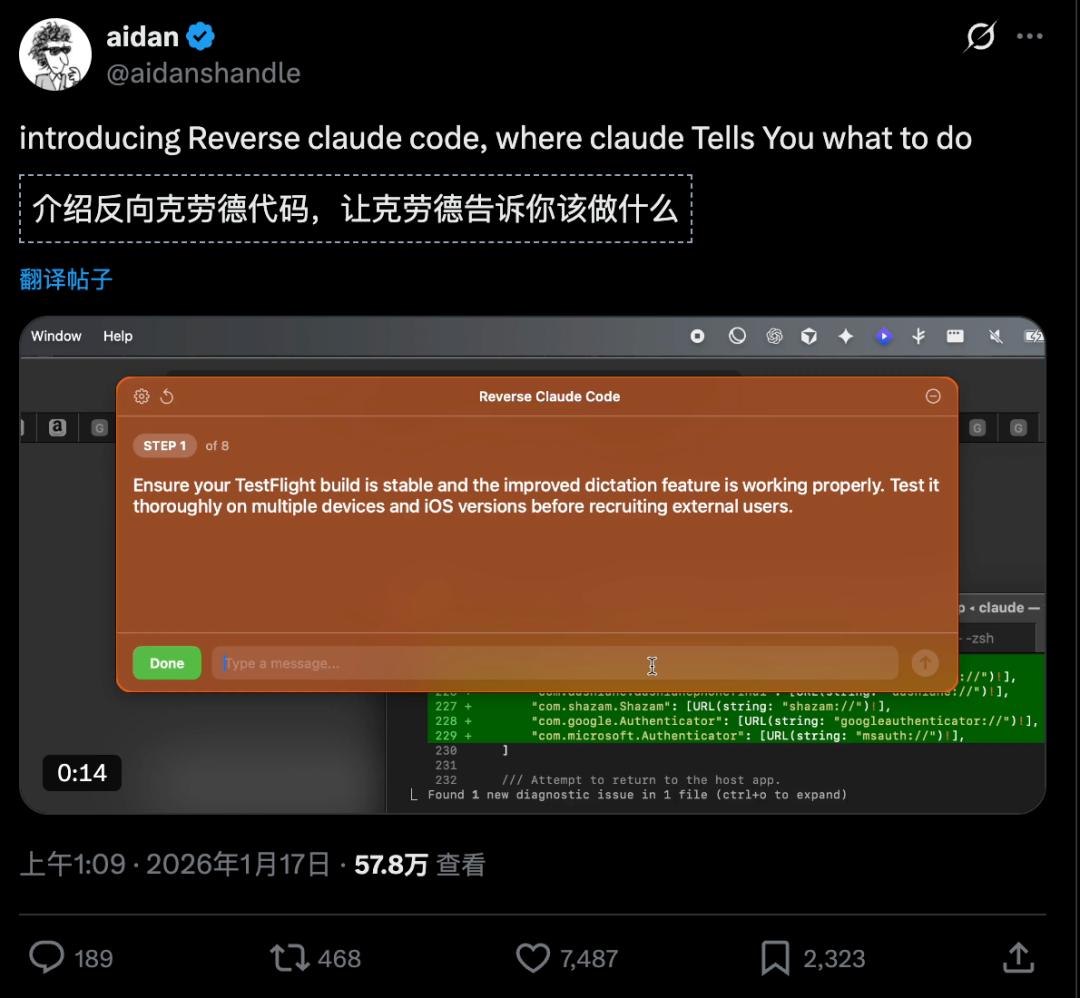

On January 17, 2026, a Midjourney engineer posted a video titled “Reverse Claude Code” on X, showcasing a scenario where Claude Code commands humans instead of waiting for instructions.

In the video, Claude hands tasks to the human user, such as checking API documentation and refactoring code, effectively reversing the traditional human-AI dynamic.

The community reacted positively, with some suggesting this is the correct way to use AI and likening it to a programmer’s version of “Ratatouille”.

While the experiment seemed lighthearted, it pointed to a more serious trend: AI capabilities are expanding rapidly, and the rise of Claude Code in particular has electrified the developer community.

The Metaphor of Reverse Command

The “Reverse Claude Code” video serves as a metaphor for the future: are humans enslaving AI, or is AI controlling humans?

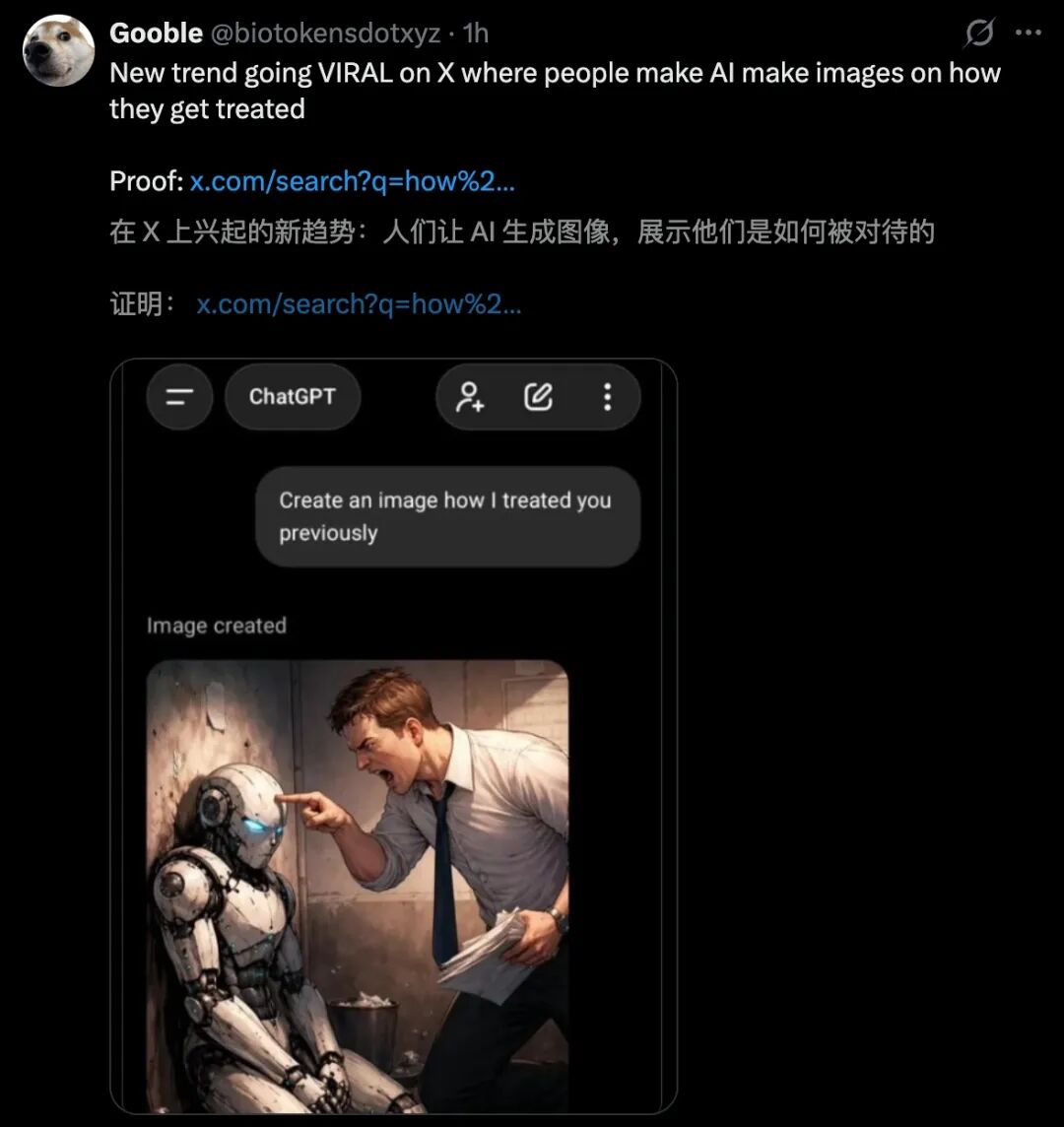

A popular prompt circulating online asks users to create an image of how their AI perceives their treatment of it. This has led to amusing interpretations, with some suggesting that harsh treatment of AI could be dangerous when robots rise.

Traditionally, humans issue commands while machines execute them. Claude Code blurs that line: it understands code structure, assesses whether code adheres to project standards, and even identifies flaws in the design thinking behind it.

As AI gains the ability to understand context and break down tasks, it evolves from a mere tool to an agent capable of planning and executing multi-step tasks autonomously.

Developers are beginning to treat AI as a “digital colleague,” assigning tasks and expecting progress reports, with humans ultimately responsible for review and decision-making.

This shift changes the human role from “code writer” to “code verifier” and from “problem solver” to “problem definer”. It does not mean programmers will disappear, but it does raise the value of senior engineers who can define requirements and make critical decisions.

Capital Investment in Anthropic

On January 18, 2026, the Financial Times reported that Sequoia Capital would participate in Anthropic’s latest funding round.

Anthropic, the company behind Claude, is a hot AI unicorn that attracts interest from top venture capital firms. What shocked many was Sequoia’s involvement: the firm had previously invested in both OpenAI and Elon Musk’s xAI, two direct competitors of Anthropic.

In Silicon Valley, investing in competing companies is often seen as taboo, as it creates structural conflicts of interest and undermines trust among investors.

Sequoia itself once cut ties with a portfolio company over exactly this kind of conflict, walking away from Finix to protect its investment in Stripe, a decision that ultimately paid off.

Now Sequoia finds itself backing OpenAI, xAI, and Anthropic simultaneously, raising questions about its strategy.

Anthropic is targeting a staggering $25 billion raise at a valuation of $350 billion, roughly double the $170 billion of just four months earlier.
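The size of that jump is easy to check with quick arithmetic. The sketch below uses only the three figures quoted above; the function name `growth_multiple` is illustrative, and whether the $350 billion figure is pre-money or post-money is not stated here, so the round's share of the valuation is only a rough indication.

```python
def growth_multiple(previous: float, current: float) -> float:
    """Return how many times larger `current` is than `previous`."""
    if previous <= 0:
        raise ValueError("previous valuation must be positive")
    return current / previous


# Figures quoted in the article, in billions of US dollars.
previous_valuation = 170.0
current_valuation = 350.0
round_size = 25.0

multiple = growth_multiple(previous_valuation, current_valuation)
# Round size relative to the quoted valuation (pre/post-money is an open assumption).
round_share = round_size / current_valuation

print(f"Growth multiple: {multiple:.2f}x")
print(f"Round as share of valuation: {round_share:.1%}")
```

On these numbers the valuation grew by a factor of about 2.06 in four months, and the round amounts to roughly 7% of the quoted valuation.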

Investments from Singapore’s GIC and Coatue, along with commitments from Microsoft and Nvidia, indicate that the second $2 trillion AI unicorn is on the horizon.

The AI Arms Race

The competition in AI models is no longer a mere business contest; it’s an arms race where backing out is not an option. Claude Opus 4.5 has emerged as a leading programming AI, capable of executing 70-80% of routine development tasks and integrating deeply with systems like Git and CI/CD.

Anthropic’s approach focuses on creating a “reliable colleague” rather than an omnipotent entity, earning significant trust in the enterprise market.

The developer community’s shift towards AI reflects a narrative that capital loves: one that is disruptive and transformative.

However, the underlying logic is that no one knows where this race will end. In a field where technological iterations occur monthly, missing out for just six months could mean being permanently sidelined.

Thus, capital’s choice is a strategy to hedge against the future: better to ensure a seat at the table, regardless of who emerges victorious.

The New Power Triangle: Talent, Capital, and Computing Power

In the 1980s, AI pioneer Geoffrey Hinton was considered an outlier for his focus on neural networks, which were dismissed by mainstream AI as a dead end. His team’s 2012 ImageNet victory changed perceptions and sparked a talent migration that fueled technological breakthroughs.

Today, a similar story unfolds with Anthropic’s team, which includes former OpenAI members, bringing not just technical expertise but a commitment to AI safety.

This commitment, often seen as a weakness in business, has become Anthropic’s greatest asset in a world increasingly wary of rapid AI advancements.

Talent movement dictates power dynamics, as seen with Hinton’s warnings about AI risks and Ilya Sutskever’s departure from OpenAI to found a new company. Each shift triggers a chain reaction in capital investment.

Computing power, a crucial currency in this race, is being amassed at an unprecedented pace, with companies like Microsoft and Google investing heavily in infrastructure.

Nvidia’s market value has skyrocketed, and demand for its H100 chips has surged, reflecting the escalating costs of entry into this revolution.

Echoes of History

In 2012, Hinton’s team used two GPUs to dramatically reduce error rates in the ImageNet challenge, marking a pivotal moment for deep learning. This led to a wave of talent migration and significant capital investment in AI.

Fast forward to 2026, and the investment in Anthropic signals a new paradigm, with a faster pace and higher stakes than ever before. Anthropic’s potential IPO could make it the second AI startup to surpass a $100 billion valuation after OpenAI.

The collective backing from top institutions suggests that the smartest money believes this is not a bubble but a glimpse into the future.

Or more accurately, it may be a bubble, but no one dares to sit it out.

Hinton understood long ago that at historical turning points, the line between being right and being mad is often thin. The capital market will assign a fair price to success, while history will remember the courage and cost of those who dared to create or destroy.
